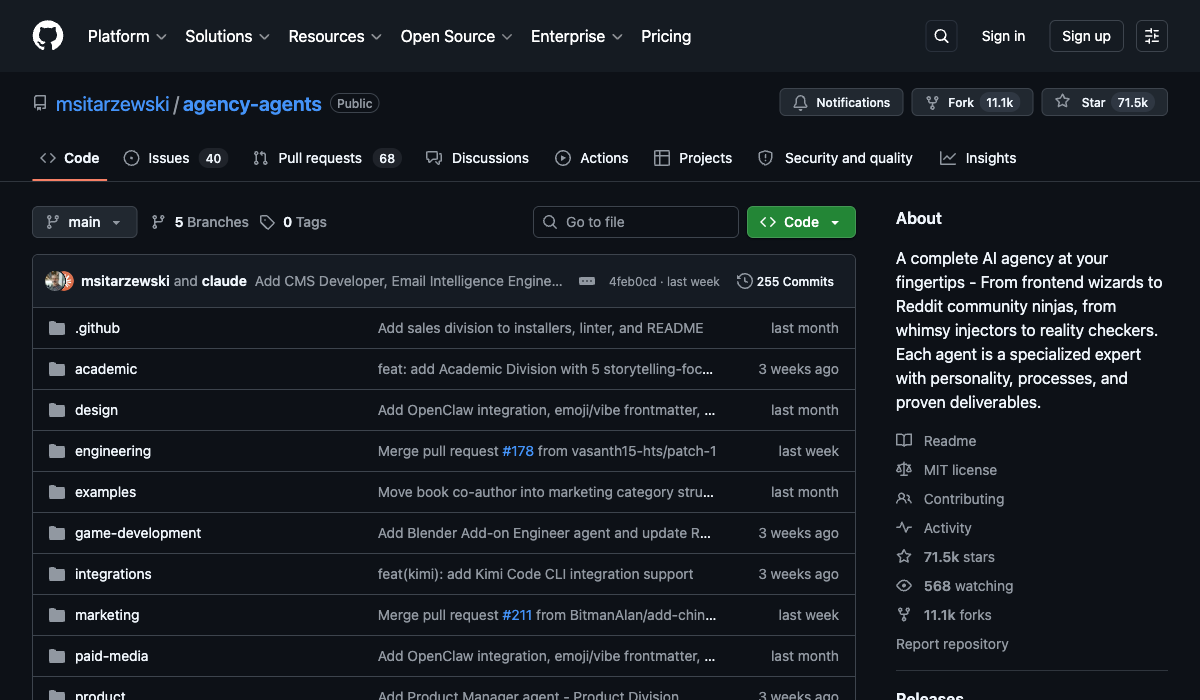

71,500 stars in six months. Eleven thousand forks. For a repo with zero runtime code.

agency-agents is a collection of 178 markdown files, each defining a specialised AI agent persona — Backend Architect, Security Engineer, CMS Developer, Game Dev, even a "Whimsy Injector" (yes, really). You drop one into your coding tool and your generic AI assistant suddenly has opinions about database schema design instead of hedging every answer with "it depends."

It works with Claude Code, Cursor, Aider, Windsurf, and about ten other tools. There's no server. No database. No npm install. Just markdown and a conversion script.

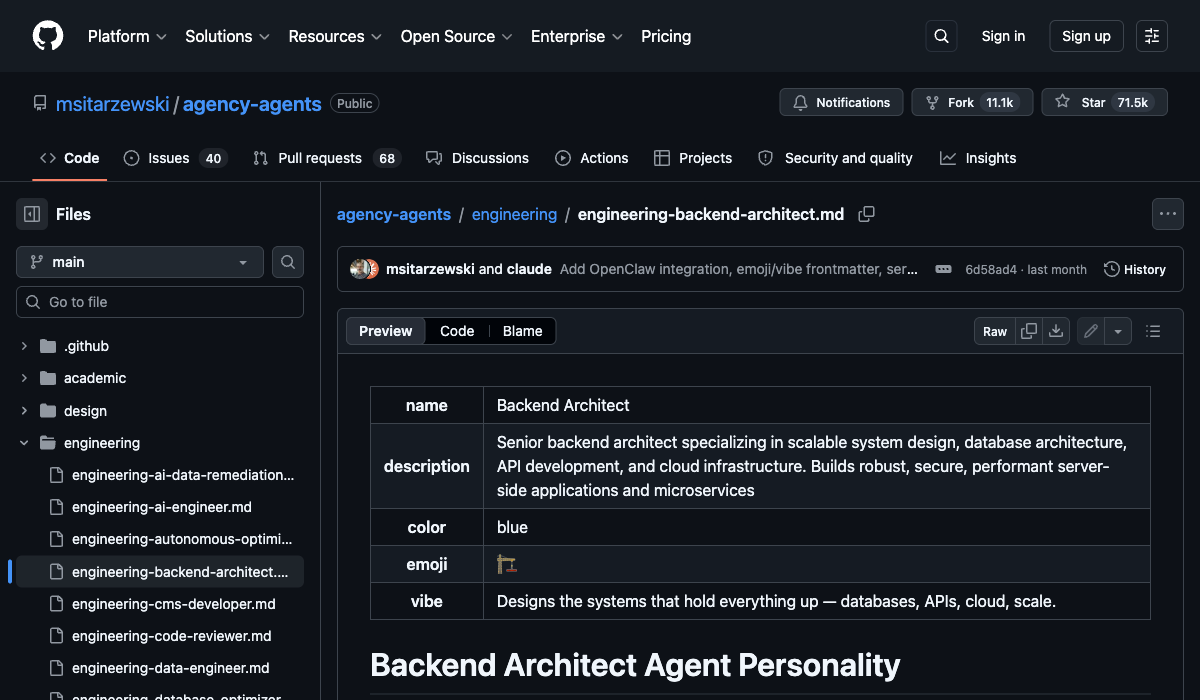

Agents as characters, not configs

What caught my eye wasn't the agent count — it was the structure. Each agent file has YAML frontmatter with fields you wouldn't expect: color, emoji, and vibe.

The Backend Architect's vibe? "Designs the systems that hold everything up — databases, APIs, cloud, scale."

This is borrowed from game narrative design. Give a character a personality and it behaves more consistently than one that's just been handed a job description. The agent files don't just list technical skills; they define how the agent communicates, what it prioritises, and what its "critical rules" are.

It's a pattern I haven't seen many people use well in prompt engineering. Most of us write system prompts that read like a requirements doc. These read more like character sheets.
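To make the frontmatter idea concrete, here is a minimal sketch that writes a trimmed, illustrative agent file and pulls one field back out. Only `color`, `emoji` and `vibe` are field names mentioned above, and the vibe text is the Backend Architect's. The `name` field, the colour and emoji values, and the `frontmatter_field` helper are my own placeholders. It assumes the frontmatter sits between two `---` lines and that each value fits on one line.

```shell
# Illustrative agent file: the layout and most values are assumptions, not copied from the repo.
sample=$(mktemp)
cat > "$sample" <<'EOF'
---
name: Backend Architect
color: blue
emoji: 🏗️
vibe: Designs the systems that hold everything up — databases, APIs, cloud, scale.
---

# Backend Architect
(body: communication style, priorities, critical rules)
EOF

# frontmatter_field FILE KEY: print KEY's value from the leading frontmatter block.
frontmatter_field() {
  awk -v key="$2" '
    /^---[ \t]*$/ { fence++; next }          # count the --- delimiter lines
    fence >= 2 { exit }                      # past the closing delimiter: stop
    fence == 1 && index($0, key ":") == 1 {  # inside frontmatter, line starts with "key:"
      sub(/^[^:]*:[ \t]*/, "")               # drop the key and the colon
      print
      exit
    }
  ' "$1"
}

frontmatter_field "$sample" vibe
```

Pointed at a real clone, a loop such as `for f in agency-agents/engineering/*.md; do frontmatter_field "$f" vibe; done` would be a quick way to skim every agent's personality line, provided the real files follow the layout assumed here.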

The one that doesn't write code

Most of the agents do what you'd expect — a Frontend Developer writes frontend code, a DevOps Automator sets up pipelines. Fine.

But the Workflow Architect agent is different. It doesn't produce code at all. It maps every path through a system — happy paths, failure modes, recovery actions, handoff contracts — before anyone writes a line. Spec-first, code-second.

There's also an Agents Orchestrator that coordinates multiple agents in sequence with quality gates and retry logic, all defined in markdown. Dev builds something, QA reviews it, if it fails it goes back with specific feedback, up to three retries per task. The pipeline itself is a readable spec.

That's the bit worth paying attention to. Not "here's 178 agents" but "here's a way to compose agents into workflows with built-in feedback loops."

```mermaid
graph LR
    A[Workflow Architect] -->|spec| B[Backend Agent]
    B -->|code| C[QA Agent]
    C -->|pass| D[Integration]
    C -->|fail| B
```
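The repo describes this loop in markdown rather than code, so what follows is only my reading of it as a shell sketch. `dev_build`, `qa_review` and `run_task` are hypothetical stand-ins for the Dev and QA agents, and the QA gate is deliberately trivial: it passes only work that has already been revised once. The part worth looking at is the shape: build, review, feed the specific feedback back in, and stop after three retries.

```shell
MAX_RETRIES=3

# Hypothetical dev step: "builds" the task, folding in QA feedback when there is any.
dev_build() {
  local task=$1 feedback=$2
  if [ -n "$feedback" ]; then
    echo "$task (revised: $feedback)"
  else
    echo "$task (first draft)"
  fi
}

# Hypothetical quality gate: succeeds for revised work, otherwise prints feedback and fails.
qa_review() {
  case $1 in
    *revised*) return 0 ;;
    *) echo "missing error handling"; return 1 ;;
  esac
}

# One initial attempt plus up to MAX_RETRIES retries, passing QA feedback back to dev.
run_task() {
  local task=$1 feedback="" attempt=0 artifact
  while [ "$attempt" -le "$MAX_RETRIES" ]; do
    artifact=$(dev_build "$task" "$feedback")
    if feedback=$(qa_review "$artifact"); then
      echo "PASS after $attempt retries: $artifact"
      return 0
    fi
    attempt=$((attempt + 1))
  done
  echo "FAIL: $task escalated after $MAX_RETRIES retries"
  return 1
}

run_task "login endpoint"
# prints: PASS after 1 retries: login endpoint (revised: missing error handling)
```

In a real setup each function would hand off to an agent session instead of echoing a string. The loop stays the same.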

The reality check

The creator, msitarzewski, authored about 46% of the commits. That's a worryingly low bus factor for a repo this popular. There are 40 open issues and 68 pull requests, which suggests the community is contributing but the review bottleneck is real.

There's no quality benchmarking either. Every agent has "success metrics" defined in its file, but nobody's measuring whether the Backend Architect actually produces better schemas than a generic prompt. The claim is that specialised agents reduce hallucination and improve coherence — and that tracks with what I've seen — but there's no data backing it up here.

It's all in git though, so if you want to track how an agent evolves you can just watch the file history. That's the upside of everything being plain text — diffs are easy and forks are cheap.
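Watching the file history is just `git log` scoped to one path. A minimal sketch, where `agent_history` is a hypothetical helper name and the example path assumes the directory layout used in the setup commands later in this post:

```shell
# agent_history REPO_DIR FILE: one line per commit that touched FILE, following renames.
agent_history() {
  git -C "$1" log --follow --oneline -- "$2"
}

# Example, run next to a clone of the repo:
#   agent_history agency-agents engineering/engineering-backend-architect.md
```

Swap `--oneline` for `-p` to see the actual prompt changes commit by commit.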

The names can sound a bit silly at first glance. "Reddit Community Builder"? But crack it open and it's actually practical — there's a 90/10 rule (90% value-add content, 10% promotional max), specific subreddit research workflows, AMA coordination playbooks, and success metrics like maintaining 85%+ upvote ratios on educational content. It reads less like a meme and more like a community marketing handbook you'd hand to a new hire.

What you'd actually do with this

You're probably not going to import all 178 agents. That would be mad.

The real value is seeing the pattern. A good agent file is:

- A personality with a vibe and communication style

- Critical rules that act as hard constraints

- A defined workflow with specific deliverables

- Success metrics so you know when it's done

Once you see it laid out like that, you realise you could write two or three agents that match your actual codebase and workflow — and they'd be more useful than the entire collection.

I've already nicked the vibe field idea for my own Claude Code agents. It's a small thing but it changes how the agent approaches problems. Give it a personality and it stops sitting on the fence.

Setting it up with Claude Code

The repo was built for Claude Code, so there's no conversion step. The markdown files work as-is.

Clone and copy the agents you want:

```shell
git clone https://github.com/msitarzewski/agency-agents.git
mkdir -p ~/.claude/agents

# grab individual agents
cp agency-agents/engineering/engineering-backend-architect.md ~/.claude/agents/
cp agency-agents/engineering/engineering-code-reviewer.md ~/.claude/agents/
```

Or if you want to shotgun the whole engineering division in:

```shell
cp agency-agents/engineering/*.md ~/.claude/agents/
```

There's also an install script if you want the lot:

```shell
# run from the root of the cloned repo
cd agency-agents
./scripts/install.sh --tool claude-code
```

Using an agent in a session:

Once the files are in ~/.claude/agents/, you just reference the agent by name in your prompt:

```text
Activate Backend Architect and review this API schema.

Use the Code Reviewer agent to go through my last PR.
```

Claude Code picks up the personality, critical rules, and workflow from the markdown file. You'll notice the responses change — more opinionated, more structured, less "it depends."

The one thing I'd actually recommend: Don't install all 178. Pick two or three that match what you're building right now. The Backend Architect and Code Reviewer are solid starting points for most codebases. Try them for a week, see if the output improves, then decide if you want more.

And if you want to write your own — which you probably will after seeing the format — just create a new .md file in ~/.claude/agents/ with the same frontmatter structure. The vibe field is optional but worth adding. It's the difference between an agent that follows instructions and one that has a point of view.
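As a starting point, here is a sketch of a home-grown agent that follows the four-part pattern from earlier: personality, critical rules, workflow, success metrics. Everything in it is a placeholder of mine: the `new_agent` helper, the `api-reviewer` name, the section headings and the wording. I'm assuming `name` and `description` belong in the frontmatter alongside `color`, `emoji` and `vibe`, so compare it against a real file from the repo before relying on it.

```shell
# new_agent DIR: write a hypothetical agent file into DIR (e.g. ~/.claude/agents).
new_agent() {
  local dir=$1
  mkdir -p "$dir"
  cat > "$dir/api-reviewer.md" <<'EOF'
---
name: api-reviewer
description: Reviews REST API changes for consistency and breaking changes.
color: teal
emoji: 🔍
vibe: Blunt about breaking changes, generous with concrete fixes.
---

## Communication style
Direct and specific. Pick one recommendation and justify it.

## Critical rules
- Never approve a breaking change without a migration note.

## Workflow
1. Read the diff.
2. List every contract change.
3. Propose a fix for each finding.

## Success metrics
- Every finding cites a file and a line.
EOF
}

# Usage: new_agent ~/.claude/agents
```

Keeping the file this short is deliberate. It is easier to tell which rule changed the agent's behaviour when there are only a handful of them.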

The verdict

The approach is smarter than the repo. Personality-driven agent prompts work. The orchestration pattern with quality gates is worth studying. The Workflow Architect is a genuinely good idea.

But 178 agents is a catalogue, not a toolkit. The ones you'll actually use are the two or three you write yourself after seeing how these are structured.

Clone it, read five agent files, steal the format, write your own. That's the move.
